All rights reserved. For enrolled students only. Redistribution prohibited.

First-order systems#

What are we going to cover?#

Introduce first-order (single-variable) linear ordinary differential equations (ODEs)

General solution

Free response and time constants

Forced response under step and sinusoidal inputs

First-order systems#

A first-order (single-variable, i.e., scalar) linear ordinary differential equation (ODE) is represented as

\[\dot{x}(t) = A x(t) + B u(t),\]

where \(A \in \mathbb{R}\) and \(B \in \mathbb{R}\). Here, \(x(t)\) is the state of the system at time \(t\) and \(u(t)\) is an input to the system at time \(t\). Let \(x_{0} \in \mathbb{R}\) be the initial condition, i.e., \(x(0) = x_0\).

You may ask: What makes a differential equation an ordinary differential equation?

What makes an ODE a linear one? Why linear?

While it does not make much of a difference for first-order systems, linear system models typically also include an output equation:

\[y(t) = C x(t) + D u(t),\]

where \(y(t)\) is the output at time \(t\) and \(C, D \in \mathbb{R}\).

Why do we care about first-order systems?#

They are the simplest family of models we will encounter, and we will use them to get a sense of several key concepts we will cover throughout the semester.

Example: Cruise control as a first-order system#

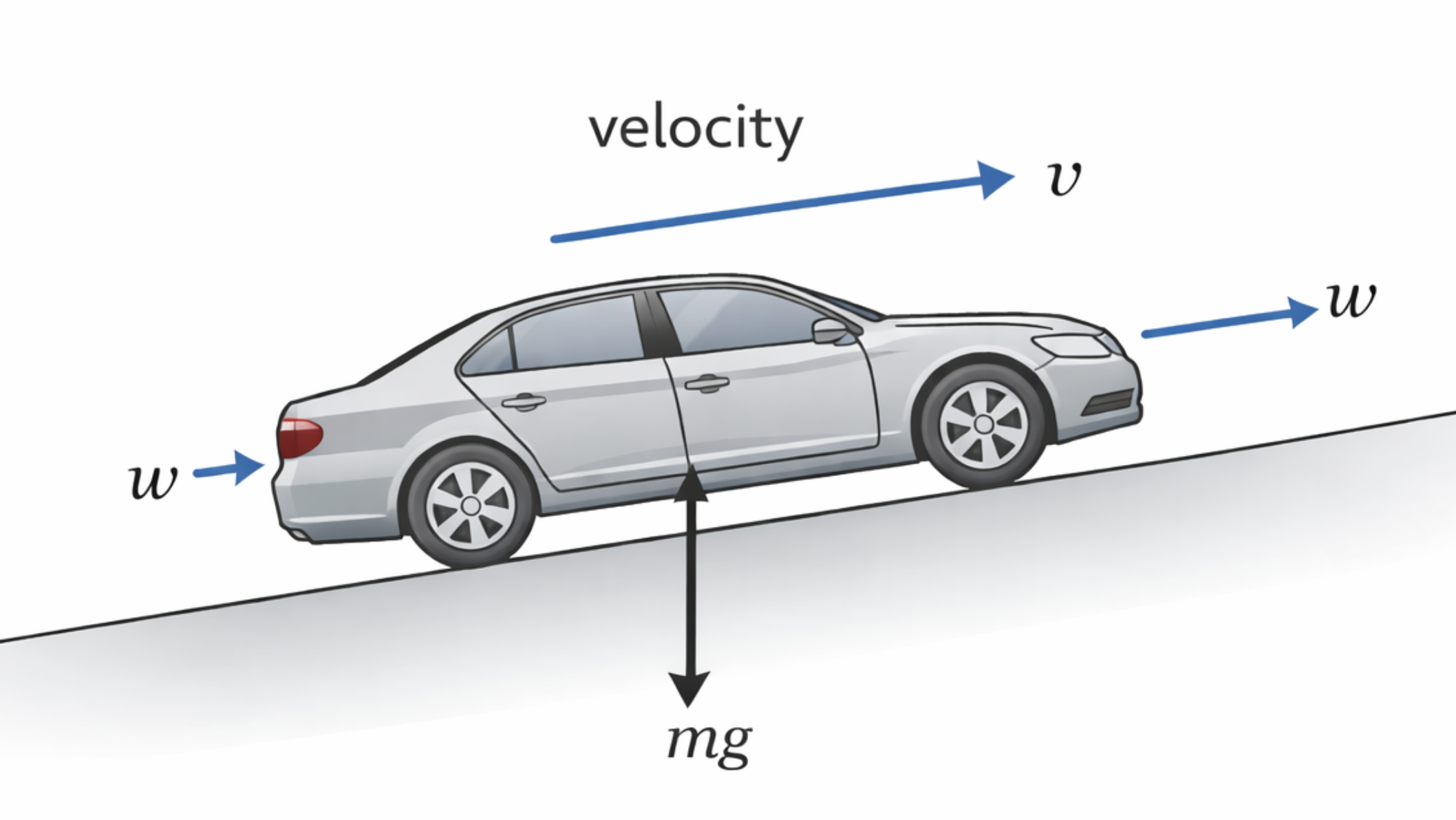

The change in the speed of a car with respect to time can be represented as the first-order, linear ODE

where \(v(t)\) is the speed at time \(t\), \(w(t)\) is the throttle command at time \(t\) (this will play the role of a control input), and the signal \(d\) represents the effects of disturbances (e.g., slope or wind). The constant parameters \(\tau\) and \(K\) capture the physical properties of the vehicle (e.g., mapping throttle to drive force) and the interaction of the vehicle with the environment (e.g., drag and rolling resistance).

For a cruise control system, a common objective is to keep the difference between a driver-specified desired speed, call it \(v_d(t)\), and the actual speed \(v(t)\) small. Let us introduce a new signal, which we will refer to as the error and denote by \(e\):

\[e(t) = v_d(t) - v(t).\]

For a constant desired speed, \(\dot{v}_d(t) = 0\) for all \(t\geq 0.\) Then, \(\dot{e}(t) = -\dot{v}(t)\) and we can derive the following differential equation.

Our (control) objective will then be keeping \(|e(t)|\) as close to zero as possible.

The ODE for \(e\) includes three inputs: \(w,\) \(d\), and \(v_d\). While we will later work with multiple inputs, for now, let us assume that a control input has already been designed in the form \(w(t) = k_p e(t)\) and that there is no disturbance (i.e., \(d \equiv 0\)). Then, the model boils down to

This model will inform us about the changes in \(e\) with respect to \(v_d\).

By choosing \(x = e\), \(u=w\),

this ODE can be written in the form of the generic first-order system above.

What can we do with a model of a system?#

Predict system behavior by solving the ODE (for some given initial condition and input).

Analyze the system behavior (for all initial conditions and inputs).

Design a controller to influence the system behavior.

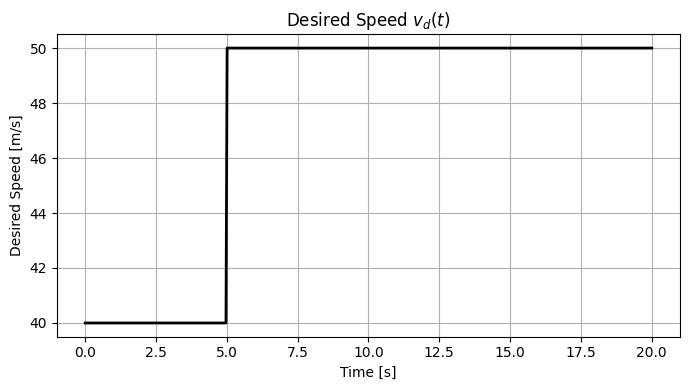

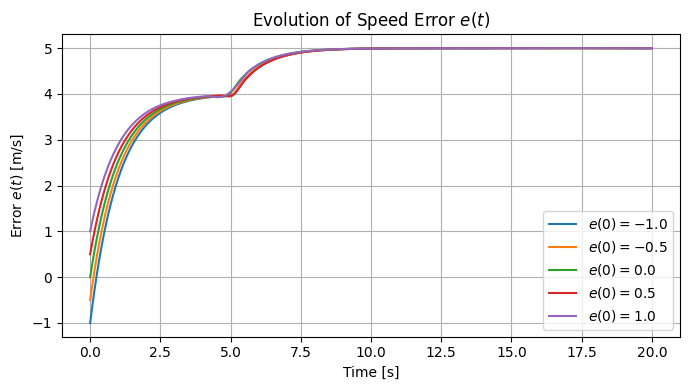

While this class focuses on the analysis of systems and the design of controllers, simulating the system behavior (for some initial conditions and/or inputs) offers visual insight. So, let’s start by simulating the cruise control model.

Simulating the system behavior#

Let us pick some arbitrary values for \(A,\) \(B,\) \(v_d\) and initial conditions and simulate the system behavior.
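As a minimal sketch of such a simulation, the Python snippet below integrates the generic model \(\dot{x}(t) = A x(t) + B u(t)\) with a classical fourth-order Runge-Kutta scheme. The function name `simulate_first_order`, the step size, and all numerical values are illustrative assumptions, not the values used in the course code.

```python
import math

def simulate_first_order(A, B, u, x0, t_final, dt=1e-3):
    """Integrate x'(t) = A*x(t) + B*u(t) from x(0) = x0 with classical RK4."""
    n_steps = int(round(t_final / dt))
    f = lambda t, x: A * x + B * u(t)   # right-hand side of the ODE
    ts, xs = [0.0], [x0]
    t, x = 0.0, x0
    for _ in range(n_steps):
        k1 = f(t, x)
        k2 = f(t + dt / 2, x + dt / 2 * k1)
        k3 = f(t + dt / 2, x + dt / 2 * k2)
        k4 = f(t + dt, x + dt * k3)
        x += dt / 6 * (k1 + 2 * k2 + 2 * k3 + k4)
        t += dt
        ts.append(t)
        xs.append(x)
    return ts, xs

# Illustrative values only (assumptions, not taken from the notes).
A, B, v_d, x0 = -0.5, 0.5, 20.0, 0.0
ts, xs = simulate_first_order(A, B, lambda t: v_d, x0, t_final=20.0)
print(f"x({ts[-1]:.1f}) = {xs[-1]:.4f}")
```

With these values the state settles near \(-(B/A)\, v_d = 20\); changing `A` changes how quickly it gets there.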

We will frequently simulate the behavior of the systems we work with. For now, let us go back to our main goal: analyzing the system behavior and, eventually, designing a controller so that the system we control behaves as we want it to.

What does it mean to be a solution to an ODE?#

In a nutshell, a solution of an ODE satisfies “the ODE and its initial condition.” That is, if \(\bar{x}\) is a solution for the input signal \(u\), then

\[\dot{\bar{x}}(t) = A \bar{x}(t) + B u(t) \quad \text{for all } t \geq 0\]

and

\[\bar{x}(0) = x_0.\]

Solution of the ODE for a first-order system#

The solution is

\[x(t) = e^{At} x_0 + \int_0^t e^{A(t-s)} B u(s)\, ds.\]

We refer to “the solution” here rather than “a solution.” You may wonder whether an ODE always has a solution and, when one exists, whether it is unique.

How do we know that the above (candidate) solution is indeed a solution? Well, check whether it satisfies the conditions for being a solution.

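Check the initial condition:

\[x(0) = e^{A \cdot 0} x_0 + \int_0^0 e^{A(0-s)} B u(s)\, ds = x_0.\]

Check whether it satisfies the differential equation:

\[\dot{x}(t) = A e^{At} x_0 + e^{A(t-t)} B u(t) + \int_0^t A e^{A(t-s)} B u(s)\, ds = A \left( e^{At} x_0 + \int_0^t e^{A(t-s)} B u(s)\, ds \right) + B u(t) = A x(t) + B u(t).\]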

How did we take the derivative of an integral? Recall: Leibniz integral rule
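The two checks can also be carried out numerically. The sketch below evaluates the candidate solution \(x(t) = e^{At} x_0 + \int_0^t e^{A(t-s)} B u(s)\, ds\) with a composite Simpson rule and compares a finite-difference estimate of \(\dot{x}(t)\) against \(A x(t) + B u(t)\). The helper name `solution`, the sinusoidal input, and the parameter values are assumptions chosen for illustration.

```python
import math

def solution(A, B, u, x0, t, n=2000):
    """Evaluate e^{At} x0 + int_0^t e^{A(t-s)} B u(s) ds; n must be even (Simpson)."""
    if t == 0.0:
        return x0                      # the integral over [0, 0] vanishes
    h = t / n
    g = lambda s: math.exp(A * (t - s)) * B * u(s)   # integrand
    total = g(0.0) + g(t)
    for i in range(1, n):
        total += (4 if i % 2 else 2) * g(i * h)      # Simpson weights 4, 2, 4, ...
    return math.exp(A * t) * x0 + total * h / 3

A, B, x0 = -1.5, 2.0, 1.0   # illustrative values
u = math.sin                # illustrative input signal
t, eps = 1.2, 1e-4

# Initial condition: x(0) should equal x0.
ic_error = abs(solution(A, B, u, x0, 0.0) - x0)

# ODE residual: central-difference derivative minus (A x + B u).
x_dot = (solution(A, B, u, x0, t + eps) - solution(A, B, u, x0, t - eps)) / (2 * eps)
residual = abs(x_dot - (A * solution(A, B, u, x0, t) + B * u(t)))
print(f"initial-condition error = {ic_error:.1e}, ODE residual = {residual:.1e}")
```

Both printed numbers should be close to zero. The residual is limited by the finite-difference step `eps`, not by the formula itself.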

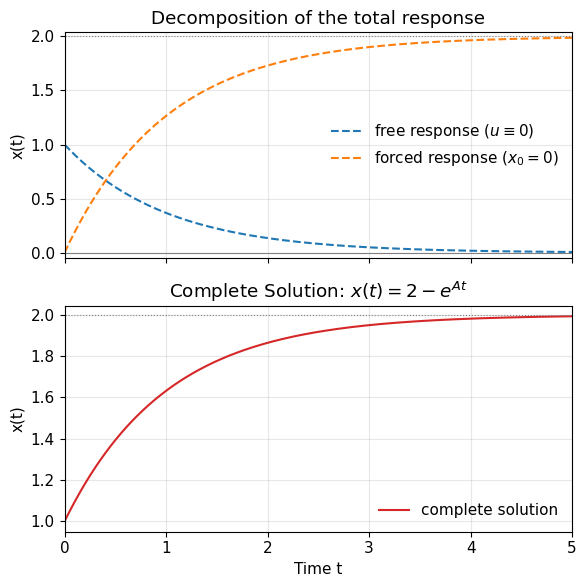

Complete solution = free response + forced response#

Let us take a closer look at the solution:

\[x(t) = e^{At} x_0 + \int_0^t e^{A(t-s)} B u(s)\, ds.\]

The so-called free response is

\[x_{free}(t) = e^{At} x_0\]

and it does not involve any contribution from the input \(u\). It is therefore sometimes also called the unforced response.

The so-called forced response is

\[x_{forced}(t) = \int_0^t e^{A(t-s)} B u(s)\, ds\]

and it does not involve any contribution from the initial condition; it is driven solely by the input.

We can therefore analyze the free response and forced response separately to reason about the complete solution.

Free response and stability of a system#

The free response (also called the natural response) is the part of the system’s output that arises solely from its initial conditions, with no external input applied.

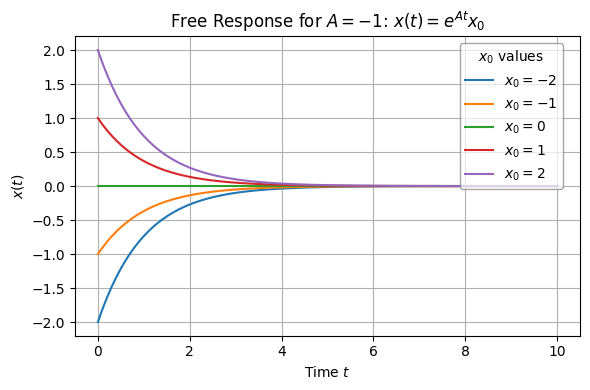

Note that the only system parameter that appears in \(e^{At}x_0\) is \(A.\) Let’s analyze the free response for three cases with respect to the value of \(A.\)

Case 1: \(A < 0\)#

\[\lim_{t \to \infty} e^{At} x_0 = 0\]

regardless of the value of \(x_0\). We will call the system (asymptotically) stable in this case. The free response converges to \(0\) for all initial conditions.

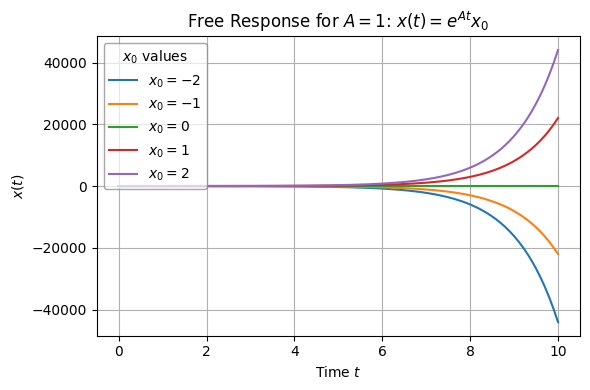

Case 2: \(A > 0\)#

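\[\lim_{t \to \infty} \left| e^{At} x_0 \right| = \infty \quad \text{for every } x_0 \neq 0.\]

We will call the system unstable in this case. The free response diverges for every nonzero initial condition.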

(For \(x_0 = 0\), \(x(t)\) remains at \(0\).)

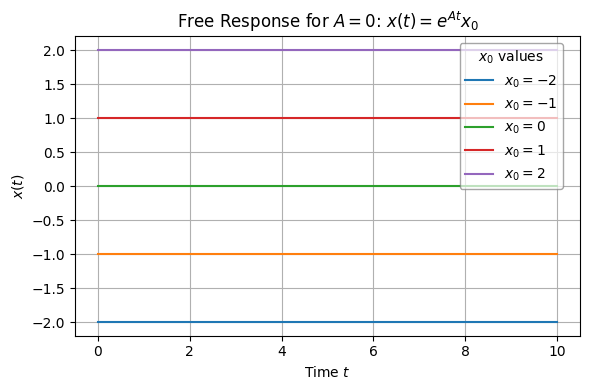

Case 3: \(A = 0\)#

Here \(e^{At} x_0 = x_0\) for all \(t \geq 0\): the free response neither decays nor grows. We call the system marginally stable in this case.

Let us now simulate the free response of a linear system for several different values of \(A\) and different initial conditions \(x_0.\)

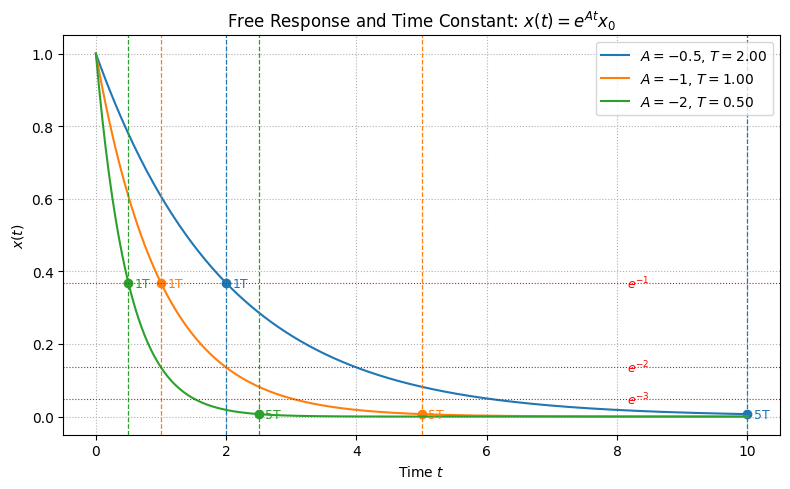
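For readers who want numbers to go with the three cases, here is a small sketch that tabulates the free response \(e^{At} x_0\) for a negative, a zero, and a positive \(A\). The values of \(A\), \(x_0\), and the sample times are arbitrary assumptions.

```python
import math

def free_response(A, x0, t):
    """Free response of x' = A x + B u: x_free(t) = e^{At} x0."""
    return math.exp(A * t) * x0

sample_times = (0.0, 1.0, 5.0, 10.0)
for A in (-1.0, 0.0, 1.0):            # stable, marginally stable, unstable
    for x0 in (-2.0, 1.0):            # two illustrative initial conditions
        values = [free_response(A, x0, t) for t in sample_times]
        print(f"A = {A:+.1f}, x0 = {x0:+.1f}: " + ", ".join(f"{v:.4g}" for v in values))
```

The rows with \(A < 0\) shrink toward zero, the rows with \(A = 0\) stay at \(x_0\), and the rows with \(A > 0\) grow in magnitude while keeping the sign of \(x_0\).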

Free response and time constant#

Focus on the case \(A < 0\) (i.e., the system is asymptotically stable).

The time constant \(T\) of the system is defined as

\[T = -\frac{1}{A} = \frac{1}{|A|}.\]

Hence,

\[x_{free}(t) = e^{At} x_0 = e^{-t/T} x_0.\]

Moral of the story: How far the state \(x(t)\) is from \(0\) does not depend on the initial condition and the elapsed time separately. Beyond \(x_0\), it depends on time only through the ratio \(t/T\), i.e., on how many time constants have passed.
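To make the role of the ratio \(t/T\) concrete, the sketch below prints the fraction \(x(t)/x_0 = e^{-t/T}\) that remains after \(0, 1, \ldots, 5\) time constants, for one slow and one fast system. The two values of \(A\) and the helper name `fraction_remaining` are illustrative assumptions.

```python
import math

def fraction_remaining(A, t):
    """Return x(t)/x0 = e^{At} = e^{-t/T} for the free response with A < 0."""
    return math.exp(A * t)

for A in (-0.25, -4.0):          # illustrative: one slow and one fast system
    T = -1.0 / A                 # time constant
    row = ", ".join(f"{fraction_remaining(A, k * T):.4f}" for k in range(6))
    print(f"A = {A:5.2f}, T = {T:4.2f}: x(kT)/x0 for k = 0..5 -> {row}")
```

Both rows are identical: after one time constant about 37% of the initial gap remains, after three about 5%, and after five less than 1%, no matter how fast or slow the system is in absolute time.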

Here is more on the practical uses of time constants: Time constants uses

Let’s look at an example.

You may want to pause here and ask yourself how to interpret the figure above. You may also go into the code and change the system parameters and analyze how such changes affect the speed of response.

Forced response#

Recall that the forced response for a first-order system is

\[x_{forced}(t) = \int_0^t e^{A(t-s)} B u(s)\, ds.\]

It determines how the system reacts to external inputs.

The table below summarizes a comparison between the free response and forced response of a stable system.

The forced response is determined by the input. Therefore, for a better understanding, we will look into several (canonical) input types.

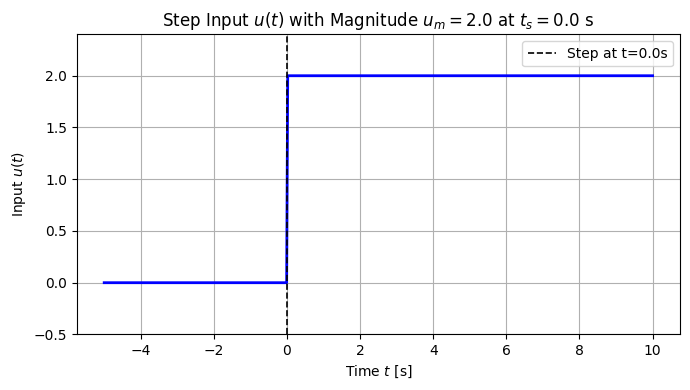

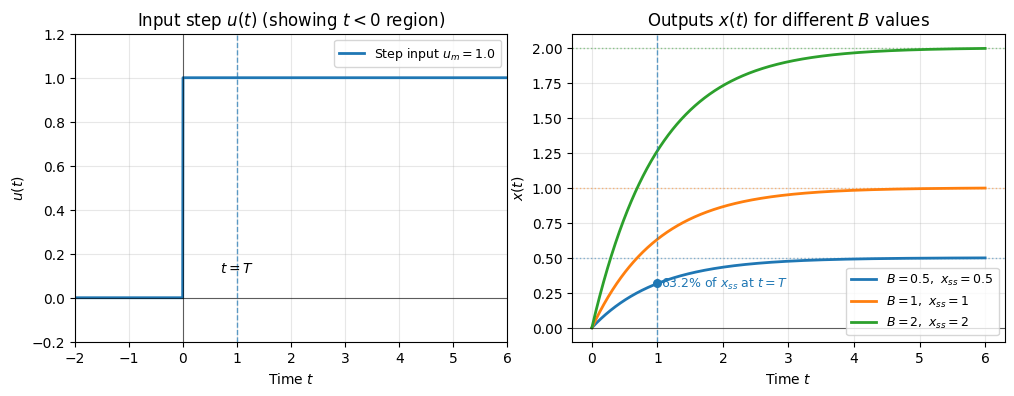

Forced response with a step input (and zero initial conditions)#

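Consider the step input with magnitude \(u_m\):

\[u(t) = u_m \quad \text{for all } t \geq 0.\]

For this step input, the forced response becomes (for \(A \neq 0\))

\[x_{forced}(t) = \int_0^t e^{A(t-s)} B u_m\, ds = -\frac{B}{A} u_m \left(1 - e^{At}\right).\]

Then, for a stable first-order system (i.e., \(A < 0\)), \(e^{At}\) approaches zero and \(x_{forced}\) approaches a so-called steady-state value as \(t\) grows:

\[x_{ss} = \lim_{t \to \infty} x_{forced}(t) = -\frac{B}{A} u_m,\]

and the steady-state gain (from the input to the state) for the system under step input is

\[\frac{x_{ss}}{u_m} = -\frac{B}{A}.\]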

Similar expressions can be obtained for the output:

and the steady-state gain from the step input to the system output is

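The forced response approaches its steady-state value \(x_{ss} = -\frac{B}{A} u_m\) exponentially:

\[x_{forced}(t) = x_{ss} \left(1 - e^{At}\right).\]

Recall the time constant is

\[T = -\frac{1}{A}.\]

At \(t = T\), the state reaches about 63.2% of its final value:

\[x_{forced}(T) = x_{ss} \left(1 - e^{-1}\right) \approx 0.632\, x_{ss}.\]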
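The 63.2% figure, and the corresponding percentages after more time constants, can be reproduced with a few lines of Python. This is only a sketch: the function name `step_forced_response` and the parameter values are assumptions chosen for illustration.

```python
import math

def step_forced_response(A, B, u_m, t):
    """Forced response to a step of magnitude u_m: -(B/A) u_m (1 - e^{At}), A != 0."""
    return -(B / A) * u_m * (1.0 - math.exp(A * t))

A, B, u_m = -2.0, 4.0, 3.0      # illustrative values with A < 0
T = -1.0 / A                    # time constant
x_ss = -(B / A) * u_m           # steady-state value

for k in range(1, 6):
    fraction = step_forced_response(A, B, u_m, k * T) / x_ss
    print(f"t = {k} T: {100 * fraction:.1f}% of the steady-state value")
```

The percentages depend only on the number of elapsed time constants, not on the particular values of \(A\), \(B\), or \(u_m\).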

Complete solution = free response + forced response (under step inputs)#

The complete solution for a step input with magnitude \(u_m\) (applied at \(t=0\)) and an initial condition \(x_0\) is

\[x(t) = e^{At} x_0 - \frac{B}{A} u_m \left(1 - e^{At}\right)\]

and

Let us look at an example.

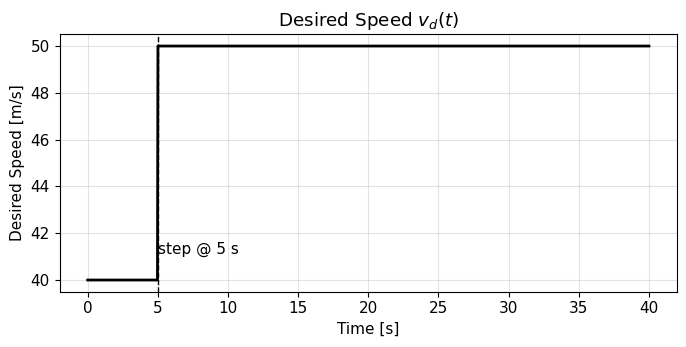

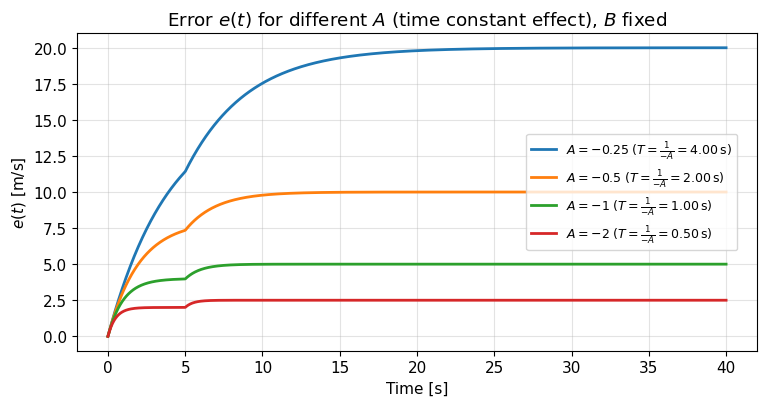
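As a sanity check on the decomposition into free and forced responses, the sketch below adds the two closed-form pieces for a step input and compares the sum with an independent numerical integration of \(\dot{x} = A x + B u_m\). The function names and parameter values are hypothetical and chosen only for illustration.

```python
import math

def complete_step_response(A, B, u_m, x0, t):
    """Free response plus forced response for a step of magnitude u_m (A != 0)."""
    free = math.exp(A * t) * x0
    forced = -(B / A) * u_m * (1.0 - math.exp(A * t))
    return free + forced

def integrate_step_input(A, B, u_m, x0, t_final, n_steps=5000):
    """Independent check: RK4 integration of x' = A x + B u_m from x(0) = x0."""
    dt = t_final / n_steps
    f = lambda x: A * x + B * u_m
    x = x0
    for _ in range(n_steps):
        k1 = f(x)
        k2 = f(x + dt / 2 * k1)
        k3 = f(x + dt / 2 * k2)
        k4 = f(x + dt * k3)
        x += dt / 6 * (k1 + 2 * k2 + 2 * k3 + k4)
    return x

A, B, u_m, x0 = -0.5, 1.0, 2.0, 10.0   # illustrative values
for t in (0.0, 1.0, 3.0, 10.0):
    closed_form = complete_step_response(A, B, u_m, x0, t)
    numeric = integrate_step_input(A, B, u_m, x0, t)
    print(f"t = {t:4.1f}: closed form = {closed_form:.6f}, RK4 = {numeric:.6f}")
```

Here the response starts at \(x_0 = 10\) and settles toward \(-(B/A)\, u_m = 4\), so the free response decays while the forced response builds up.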

Revisit the cruise control example#

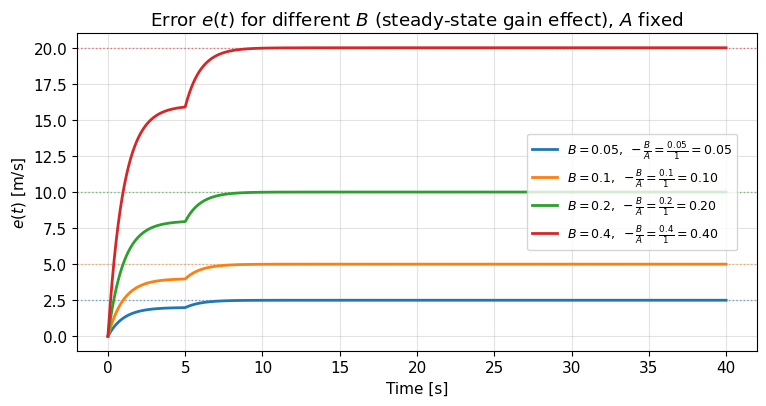

The code and the resulting figures below demonstrate the effect of the time constant and the steady-state gain on the system response.

You may want to take some time to interpret the figures. Here are some guiding questions: (i) What value does the response settle toward? (ii) How fast does it do that and what determines the speed?

Response under sinusoidal inputs#

Next, we will derive the response of a first-order linear system under sinusoidal inputs. For convenience, we will take a seemingly unintuitive route and first derive the response for a complex-valued input signal of the following form:

\[u(t) = \bar{u} e^{j \omega t},\]

where \(\bar{u}\) is a fixed, complex number, \(\omega\) is a fixed, real number, and \(j\) is the imaginary unit, i.e., \(j = \sqrt{-1}\).

Facts about complex numbers#

Why are we interested in this complex-valued signal of time? Because it is directly related to (real-valued) sinusoidal signals (and it is easy to work with). To see the relation, let’s recall Euler’s formula:

\[e^{j\theta} = \cos\theta + j \sin\theta\]

for any real-valued \(\theta.\) Accordingly, the following holds:

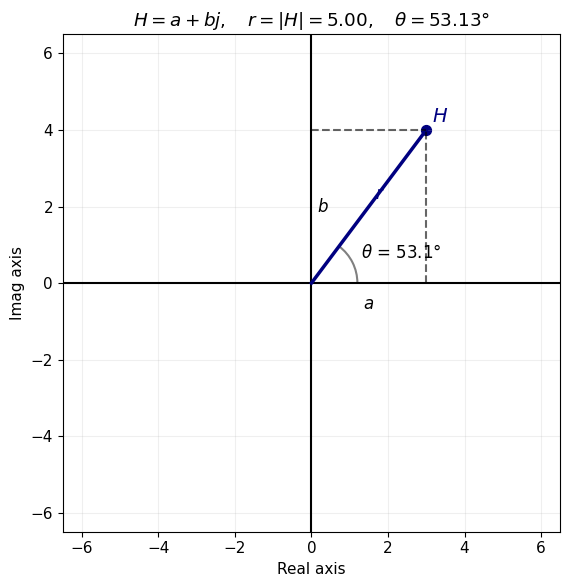

Recall that every complex number can be written in its polar form and its Cartesian form.

Cartesian form: \(H = a + j b\) where \(\operatorname{Re}(H) = a\) and \(\operatorname{Im}(H) = b.\)

Polar form: \( H = r e^{j\theta}\) where \(r\) is the magnitude (length) and \(\theta\) is the angle.

Here is an example plotted for \(H = 3 + 4j \approx 5 e^{j 0.93}\) (note: \(0.93 \text{ radians } \approx 53.13^\circ\)).
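The conversion between the two forms can be reproduced with Python's standard `cmath` module. This is a minimal sketch and not the plotting script used for the figure.

```python
import cmath
import math

H = 3 + 4j

# Cartesian -> polar: magnitude r and angle theta (in radians).
r, theta = cmath.polar(H)
print(f"r = {r:.4f}, theta = {theta:.4f} rad ({math.degrees(theta):.2f} deg)")

# Polar -> Cartesian: r * e^{j theta} recovers the original number.
H_back = cmath.rect(r, theta)
print(f"Re = {H_back.real:.4f}, Im = {H_back.imag:.4f}")
```

`cmath.polar` returns the angle in the interval \([-\pi, \pi]\), so numbers in the lower half plane come back with a negative angle.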


You can also play with the demo below to convince yourself that one can go back and forth between the two representations of complex numbers.

Sample run (command-line mode, Cartesian input): for \(a = 3\) and \(b = 2\), the converter reports the polar form \(r = 3.605551\), \(\theta = 0.588003\) rad (\(33.690068^\circ\)).

The relation between complex-valued exponential signals and real-valued sinusoidal signals#

Let us now focus on the case where \(\theta = \omega t\), where \(\omega\) is a fixed, real number and \(t\) denotes an indeterminate variable (e.g., the time in our case).

Then, Euler’s formula takes the form:

\[e^{j \omega t} = \cos(\omega t) + j \sin(\omega t).\]

Recall another fact: \(|e^{j \omega t}|=1\) for every \(\omega\) and \(t\), i.e., on the complex plane \(e^{j \omega t}\) is on the unit circle. The following animation shows how this point moves on the unit circle and how its real and imaginary parts map to sinusoidal signals of time.

This script is for fixed \(\omega\). Experiment with different values of \(\omega\) in the script and check its impact. Can you see why we will refer to \(\omega\) as “frequency”?
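Without the animation, the same facts can be checked numerically. The following sketch (not the animation script referred to above) evaluates \(e^{j \omega t}\) for two assumed values of \(\omega\) and confirms that its real and imaginary parts are \(\cos(\omega t)\) and \(\sin(\omega t)\), that it stays on the unit circle, and that it repeats every \(2\pi/\omega\) units of time.

```python
import cmath
import math

def complex_exponential(omega, t):
    """Return e^{j omega t} as a Python complex number."""
    return cmath.exp(1j * omega * t)

t = 0.3                                  # arbitrary sample time
for omega in (1.0, 2.0):                 # illustrative frequencies (rad per unit time)
    z = complex_exponential(omega, t)
    period = 2 * math.pi / omega         # time for one full revolution
    z_next = complex_exponential(omega, t + period)
    print(f"omega = {omega}: Re = {z.real:.4f} (cos = {math.cos(omega * t):.4f}), "
          f"Im = {z.imag:.4f} (sin = {math.sin(omega * t):.4f}), "
          f"|z| = {abs(z):.4f}, period = {period:.4f}, "
          f"repeats after one period: {abs(z_next - z) < 1e-12}")
```

Doubling \(\omega\) halves the period, which is why \(\omega\) is interpreted as an (angular) frequency.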