All rights reserved. For enrolled students only. Redistribution prohibited.

Frequency response and transfer functions#

What will we cover?

Steady-state response under exponential and sinusoidal inputs

Transfer functions

Bode plots

Poles and zeros (and eigenvalues)

Block diagram algebra

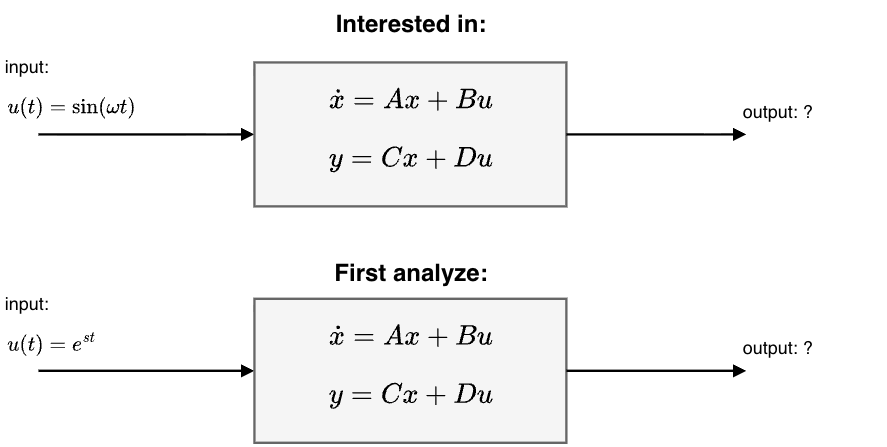

Response of a linear system under sinusoidal inputs#

We are now interested in the response (solution) of a linear system

\[ \dot{x}(t) = A x(t) + B u(t), \qquad y(t) = C x(t) + D u(t), \]

with \(A \in \mathbb{R}^{n \times n}, B \in \mathbb{R}^{n \times 1}, C \in \mathbb{R}^{1 \times n}\) and \(D \in \mathbb{R}\) under sinusoidal inputs, e.g., \(\sin(\omega t)\) or \(\cos(\omega t)\).

Rather than treating sinusoids directly, we will first analyze the response under inputs of the form

\[ u(t) = \bar{u} e^{st}, \]

with \(s \in \mathbb{C}\) and \(\bar{u} \in \mathbb{C}\).

Why care about \(e^{st}\)?#

Exponentials generate sinusoids: Using Euler’s identity, \( e^{j\omega t} = \cos(\omega t) + j\sin(\omega t), \) we see that sinusoidal signals can be represented using complex exponentials.

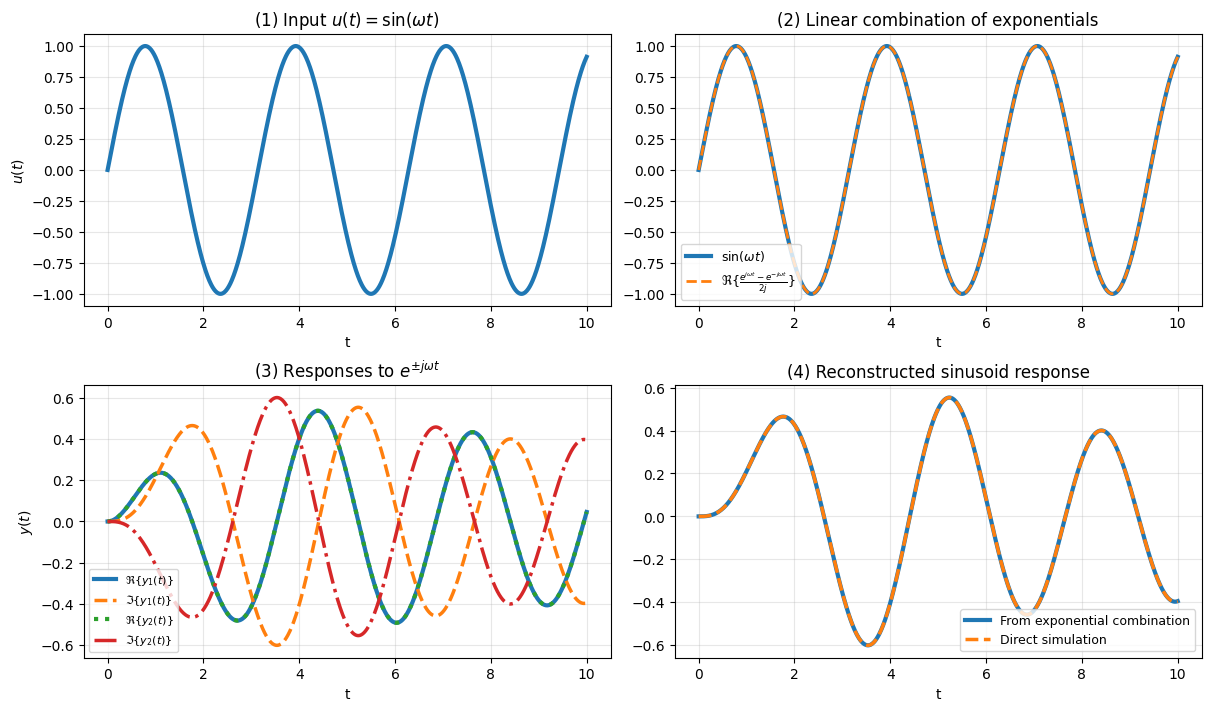

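Here is a concrete example:

\[ \cos(\omega t) = \tfrac{1}{2} e^{j\omega t} + \tfrac{1}{2} e^{-j\omega t}. \]

Thus, a sinusoidal input is a linear combination of complex exponentials with

\[ s_1 = j\omega, \qquad s_2 = -j\omega. \]

Because the system is linear, if we know the response to \(e^{s_1 t}\) and \(e^{s_2 t}\), then we automatically know the response to any linear combination:

\[ u(t) = \alpha_1 e^{s_1 t} + \alpha_2 e^{s_2 t}, \qquad \alpha_1, \alpha_2 \in \mathbb{C}. \]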

Thus, by understanding exponential inputs, we can understand a wide range of signals, including:

sinusoids,

damped oscillations,

and combinations of oscillatory modes.
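The claim that sinusoids are combinations of complex exponentials can be checked numerically. The following is a minimal sketch; the frequency and the time grid are arbitrary choices made for illustration.

```python
import numpy as np

omega = 2.0                      # hypothetical frequency in rad/s
t = np.linspace(0.0, 5.0, 501)   # time grid

# Two complex exponentials with s1 = j*omega and s2 = -j*omega
e_plus = np.exp(1j * omega * t)
e_minus = np.exp(-1j * omega * t)

# Linear combinations that reproduce cosine and sine
cos_from_exp = 0.5 * e_plus + 0.5 * e_minus
sin_from_exp = (e_plus - e_minus) / (2j)

max_err_cos = np.max(np.abs(cos_from_exp - np.cos(omega * t)))
max_err_sin = np.max(np.abs(sin_from_exp - np.sin(omega * t)))
print(max_err_cos, max_err_sin)
```

Both errors should be at the level of floating-point round-off.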

Response to an exponential input#

Assume that all eigenvalues \(\lambda_i\) of \(A\) satisfy

\[ \operatorname{Re}(\lambda_i) < 0. \]

Consider an exponential input of the form

\[ u(t) = \bar u e^{st}, \]

where \(\bar u \in \mathbb{C}\) and \(s \neq \lambda_i(A)\) for all \(i\).

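The state solution is

\[ x(t) = e^{At} x(0) + \int_0^t e^{A(t-\tau)} B u(\tau)\, d\tau. \]

Substituting \(u(\tau) = \bar u e^{s\tau}\) gives

\[ x(t) = e^{At} x(0) + \int_0^t e^{A(t-\tau)} B \bar u e^{s\tau}\, d\tau. \]

Factor out \(e^{At}\):

\[ x(t) = e^{At} x(0) + e^{At} \int_0^t e^{-A\tau} e^{s\tau}\, d\tau \, B \bar u. \]

Combine exponentials (the scalar \(e^{s\tau}\) commutes with every matrix):

\[ e^{-A\tau} e^{s\tau} = e^{(sI-A)\tau}. \]

Thus,

\[ x(t) = e^{At} x(0) + e^{At} \int_0^t e^{(sI-A)\tau}\, d\tau \, B \bar u. \]

Since \(sI - A\) is invertible (because \(s\) is not an eigenvalue of \(A\)),

\[ \int_0^t e^{(sI-A)\tau}\, d\tau = (sI-A)^{-1}\left(e^{(sI-A)t} - I\right). \]

Substituting back,

\[ x(t) = e^{At} x(0) + e^{At} (sI-A)^{-1}\left(e^{(sI-A)t} - I\right) B \bar u. \]

Using \(e^{At} e^{(sI-A)t} = e^{st} I\) and the fact that \(e^{At}\) and \((sI-A)^{-1}\) commute, we obtain

\[ x(t) = e^{At}\left(x(0) - (sI-A)^{-1} B \bar u\right) + (sI-A)^{-1} B \bar u e^{st}. \]

With \(y(t) = Cx(t) + D u(t)\), we get

\[ y(t) = C e^{At}\left(x(0) - (sI-A)^{-1} B \bar u\right) + \left(C (sI-A)^{-1} B + D\right) \bar u e^{st}. \]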

Steady-state response under exponential inputs#

Because the system is stable, the term involving \(e^{At}\) decays to zero as time increases.

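At steady state, only the forced term remains. Therefore,

\[ y_{ss}(t) = \left(C (sI-A)^{-1} B + D\right) \bar u e^{st}. \]

For stable linear systems,

\[ y_{ss}(t) = G(s)\, \bar u e^{st}, \]

where

\[ G(s) = C (sI-A)^{-1} B + D. \]

This function (of \(s\)) is called the transfer function of the system.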

Observations:

The steady-state output has the same exponential form as the input.

The system acts as a complex gain evaluated at \(s\).
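To make the idea of a complex gain concrete, here is a minimal sketch that evaluates \(G(s) = C(sI-A)^{-1}B + D\) at a single value of \(s\). The function name `transfer_function_value` and the first-order test system are hypothetical choices for illustration, not part of the notes.

```python
import numpy as np

def transfer_function_value(A, B, C, D, s):
    """Evaluate G(s) = C (sI - A)^{-1} B + D at one complex number s."""
    A = np.atleast_2d(np.asarray(A, dtype=complex))
    B = np.asarray(B, dtype=complex).reshape(-1, 1)
    C = np.asarray(C, dtype=complex).reshape(1, -1)
    n = A.shape[0]
    # Solve (sI - A) x = B instead of forming the inverse explicitly
    x = np.linalg.solve(s * np.eye(n) - A, B)
    return (C @ x).item() + D

# Hypothetical stable first-order system: x' = -x + u, y = x, so G(s) = 1/(s + 1)
G_at_2 = transfer_function_value([[-1.0]], [1.0], [1.0], 0.0, 2.0)
G_at_j = transfer_function_value([[-1.0]], [1.0], [1.0], 0.0, 1j)
print(G_at_2, G_at_j)
```

Solving a linear system rather than inverting \(sI - A\) is a common numerical choice; it fails, as expected, when \(s\) is an eigenvalue of \(A\).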

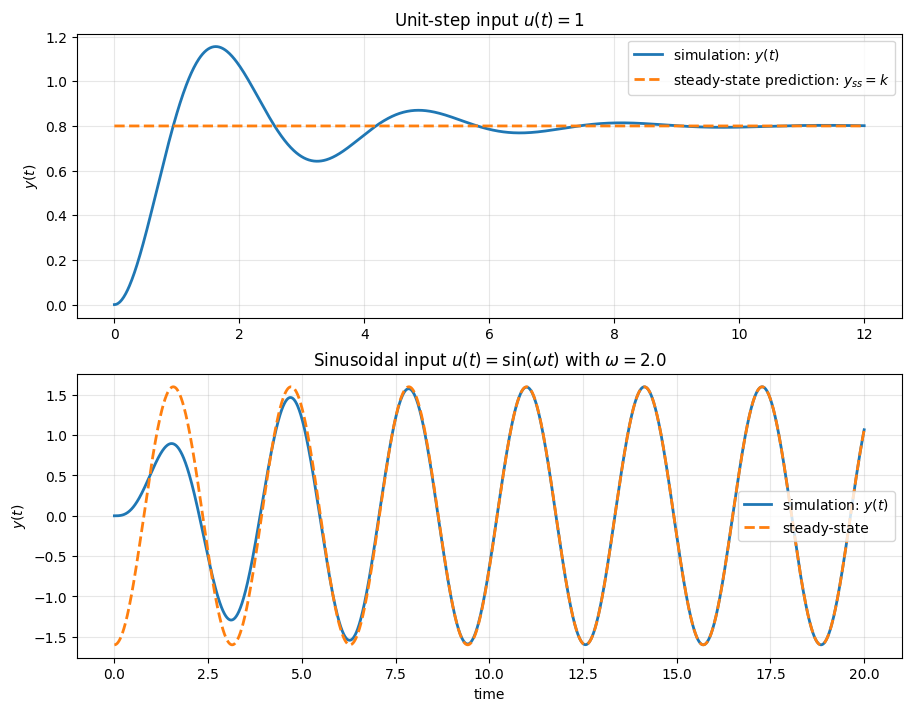

Example: Damped linear oscillator#

Consider the second-order system written in state-space form

with

For \(\zeta>0\), this is a stable damped oscillator.

The transfer function from \(u\) to \(y\) is

A unit step is the exponential input with \(s=0\) (since \(e^{0t}=1\)), so the steady-state output is

Write

By linearity, the steady-state output is the same linear combination of the corresponding steady-state responses:

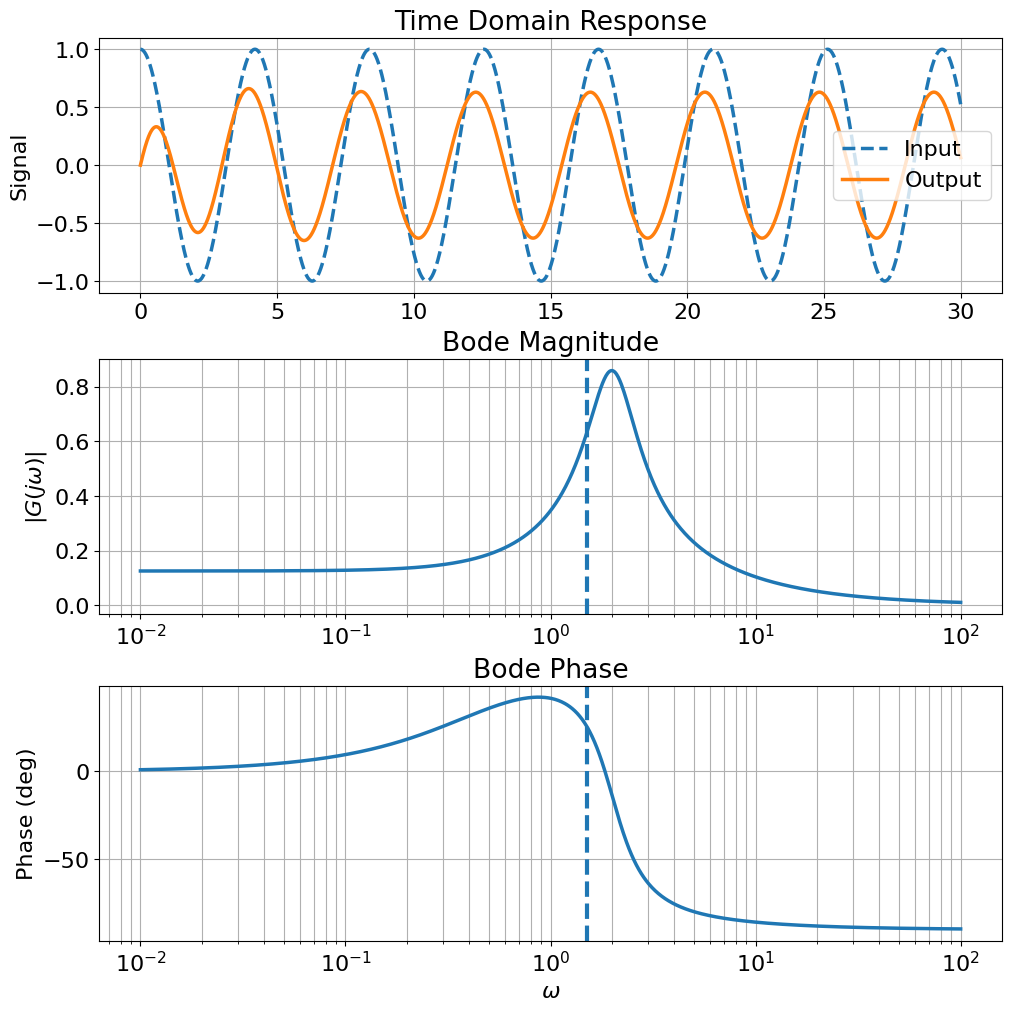

Below, we compute both the simulated response and the predicted steady-state response, and plot them together.
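A minimal sketch of such a comparison is given here. The parameter values, the particular state-space realization, and the input \(u(t) = 1 + \cos(\omega t)\) are assumptions made for illustration; they are not taken from the notes, and plotting is omitted so that the block runs without a display.

```python
import numpy as np
from scipy.signal import lsim

# Hypothetical parameters (not taken from the notes)
zeta, wn, w = 0.2, 2.0, 1.5

# Assumed realization of  y'' + 2*zeta*wn*y' + wn^2*y = u
A = np.array([[0.0, 1.0], [-wn**2, -2.0 * zeta * wn]])
B = np.array([[0.0], [1.0]])
C = np.array([[1.0, 0.0]])
D = np.array([[0.0]])

def G(s):
    """Transfer function of the assumed realization."""
    return 1.0 / (s**2 + 2.0 * zeta * wn * s + wn**2)

t = np.linspace(0.0, 40.0, 8001)
u = 1.0 + np.cos(w * t)          # step plus sinusoid

# Simulated response from zero initial conditions
_, y_sim, _ = lsim((A, B, C, D), u, t)

# Predicted steady state by linearity: G(0)*1 + Re(G(jw) e^{jwt})
y_pred = G(0.0) + np.real(G(1j * w) * np.exp(1j * w * t))

# Compare only after the transient has decayed
late = t > 30.0
max_late_error = np.max(np.abs(y_sim[late] - y_pred[late]))
print(max_late_error)
```

The two signals differ early on because of the transient term involving \(e^{At}\); after it has decayed, the remaining error should be small.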

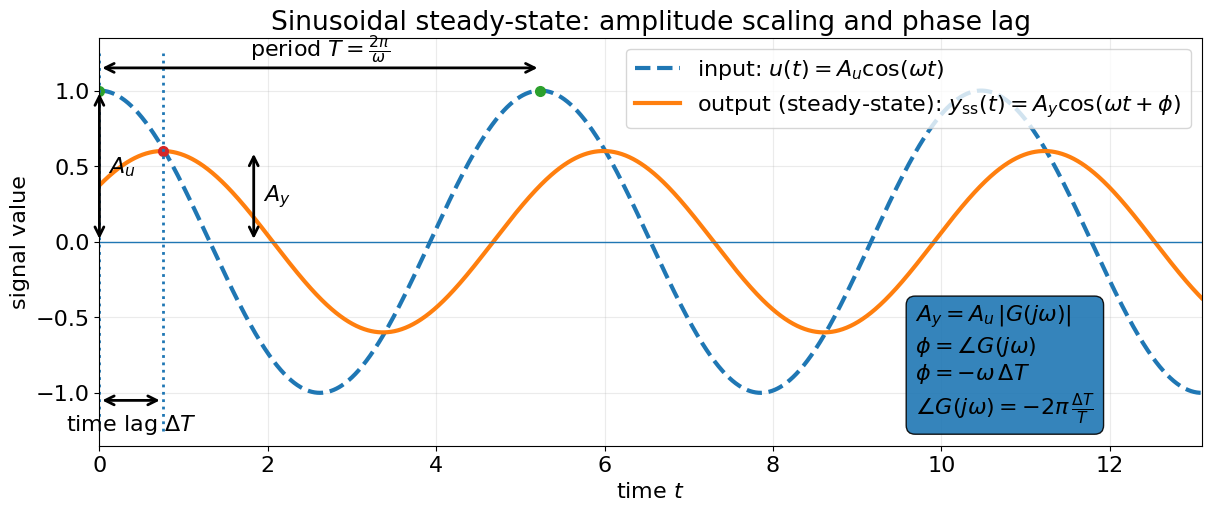

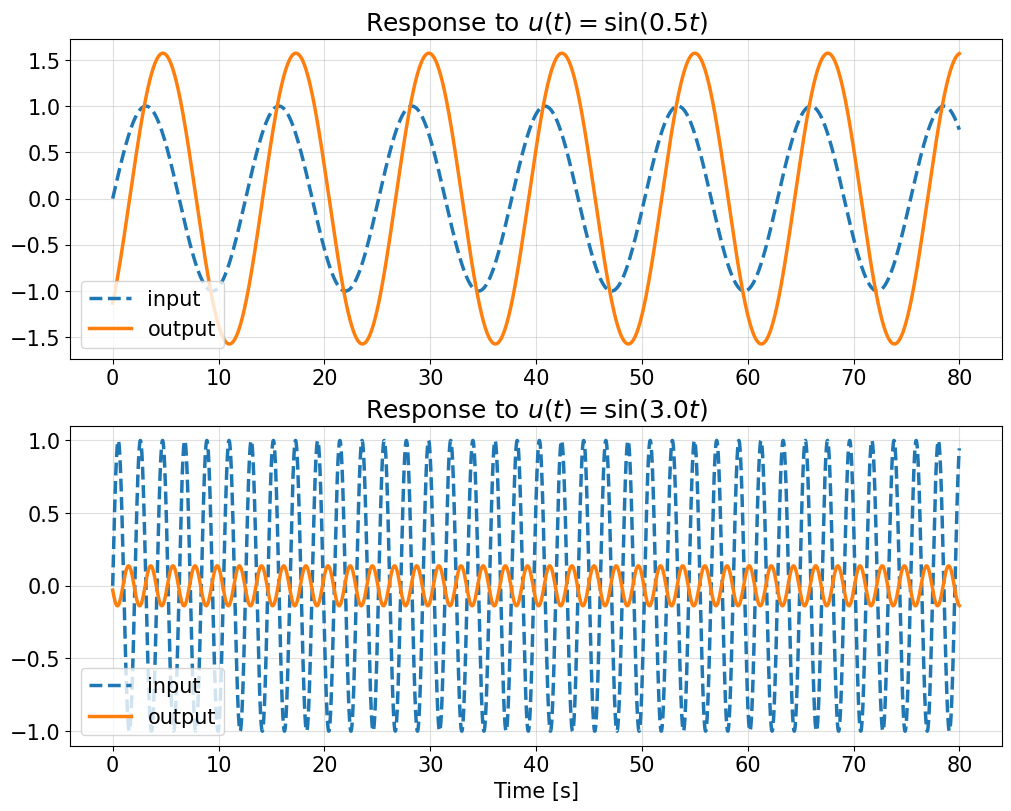

Steady-state response to sinusoidal inputs#

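Consider a stable linear time-invariant system with transfer function \(G(s)\). Let the input be

\[ u(t) = \cos(\omega t). \]

Using Euler’s identity,

\[ e^{j\omega t} = \cos(\omega t) + j \sin(\omega t), \]

we can write

\[ u(t) = \operatorname{Re}\left(e^{j\omega t}\right). \]

Because the system is linear and stable, the steady-state response to the complex exponential input

\[ e^{j\omega t} \]

is

\[ G(j\omega)\, e^{j\omega t}. \]

Taking the real part gives the steady-state output:

\[ y_{ss}(t) = \operatorname{Re}\left(G(j\omega)\, e^{j\omega t}\right). \]

Now write

\[ G(j\omega) = |G(j\omega)|\, e^{j \angle G(j\omega)}. \]

Then

\[ y_{ss}(t) = |G(j\omega)| \cos\left(\omega t + \angle G(j\omega)\right). \]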

Thus, the output sinusoid has:

the same frequency \(\omega\),

amplitude scaled by \(|G(j\omega)|\),

and phase shift \(\angle G(j\omega)\).
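The amplitude scaling and phase shift can be read off numerically from \(G(j\omega)\). The sketch below uses a hypothetical first-order transfer function \(G(s) = 1/(s+1)\) and assumes the input \(u(t) = \cos(\omega t)\).

```python
import numpy as np

def G(s):
    # Hypothetical stable first-order transfer function G(s) = 1 / (s + 1)
    return 1.0 / (s + 1.0)

omega = 1.0
Gjw = G(1j * omega)
gain = np.abs(Gjw)          # amplitude scaling |G(j*omega)|
phase = np.angle(Gjw)       # phase shift in radians

# Steady-state output for the assumed input u(t) = cos(omega t)
t = np.linspace(0.0, 10.0, 1001)
y_ss = gain * np.cos(omega * t + phase)
print(gain, np.degrees(phase))
```

For this choice the gain is \(1/\sqrt{2}\) and the phase is \(-45^\circ\), so the output lags the input by one eighth of a period.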

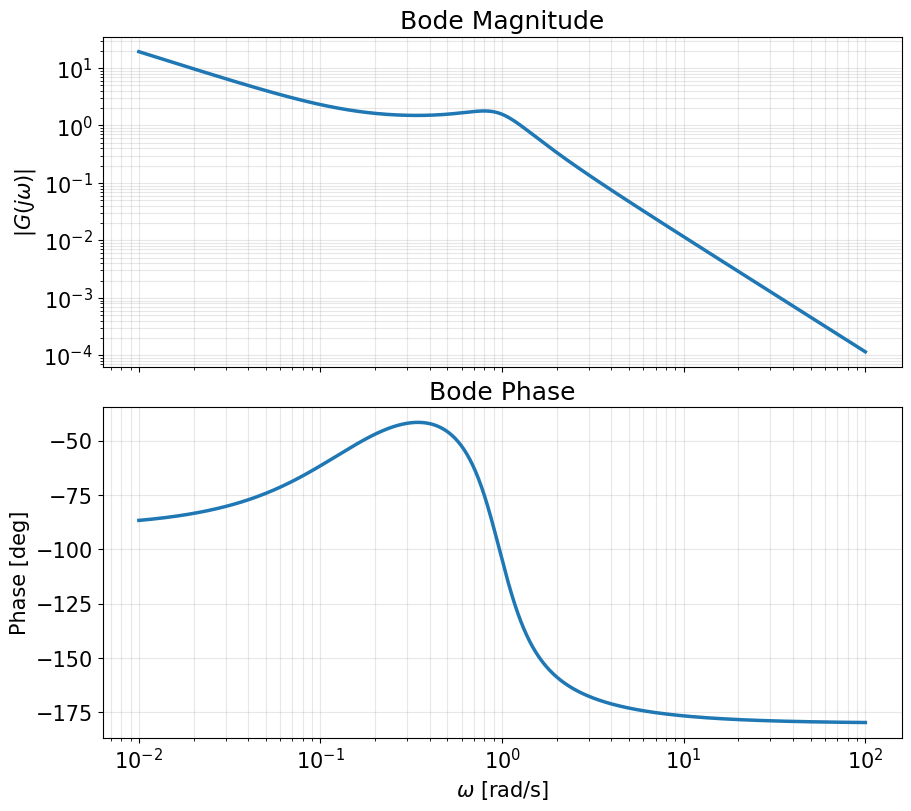

Aircraft Pitch Model#

We now consider the longitudinal pitch dynamics model presented in the University of Michigan Control Tutorials for MATLAB and Simulink (CTMS):

https://ctms.engin.umich.edu/CTMS/?example=AircraftPitch&section=SystemModeling

The model represents small perturbations about steady cruise flight and describes the pitch motion of an aircraft.

Under standard linearization assumptions (small angles, constant speed, decoupled longitudinal dynamics), the system is written in terms of:

\(\alpha\) : angle of attack

\(q\) : pitch rate

\(\theta\) : pitch angle

The control input is the elevator deflection \(\delta\).

The longitudinal pitch model is

For this model:

The input is the elevator deflection \(\delta\).

The output is the pitch angle \(\theta\).

We may express the linearized system compactly as

with

and

Hence

Compute

Therefore,

Carrying out the matrix multiplication (and using \(D=0\)) yields a third-order transfer function
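The computation \(G(s) = C(sI-A)^{-1}B + D\) can also be carried out numerically. The sketch below uses `scipy.signal.ss2tf`; the numerical entries of \(A\) and \(B\) are written as recalled from the CTMS aircraft pitch example and should be treated as assumptions to be checked against that page.

```python
import numpy as np
from scipy.signal import ss2tf

# Numerical values as recalled from the CTMS aircraft pitch example;
# treat them as assumptions and check them against the tutorial.
# State x = [alpha, q, theta], input delta, output theta.
A = np.array([[-0.313, 56.7, 0.0],
              [-0.0139, -0.426, 0.0],
              [0.0, 56.7, 0.0]])
B = np.array([[0.232], [0.0203], [0.0]])
C = np.array([[0.0, 0.0, 1.0]])
D = np.array([[0.0]])

# Coefficients in descending powers of s
num, den = ss2tf(A, B, C, D)
print("numerator:  ", np.round(num[0], 4))
print("denominator:", np.round(den, 4))
```

With these values the denominator has no constant term, so the transfer function has a pole at \(s = 0\): the pitch angle integrates the pitch rate.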

Shortcuts for obtaining the transfer function#

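Consider a linear time-invariant input–output system written in ODE form:

\[ \frac{d^n y}{dt^n} + a_1 \frac{d^{n-1} y}{dt^{n-1}} + \cdots + a_n y = b_0 \frac{d^m u}{dt^m} + b_1 \frac{d^{m-1} u}{dt^{m-1}} + \cdots + b_m u, \]

where \(y(t)\) is the output and \(u(t)\) is the input.

Key observations

For linear systems, if the input is an exponential

\[ u(t) = e^{st}, \]

then at steady state the output must also be of the form

\[ y_{ss}(t) = y_0 e^{st} \]

for some scalar \(y_0\) (provided \(s\) is not a root of the characteristic polynomial \(s^n + a_1 s^{n-1} + \cdots + a_n\)).

If \(u(t) = e^{st}\), then

\[ \frac{du}{dt} = s e^{st}, \qquad \frac{d^2 u}{dt^2} = s^2 e^{st}, \qquad \ldots, \qquad \frac{d^k u}{dt^k} = s^k e^{st}, \]

and similarly,

\[ \frac{d^k y_{ss}}{dt^k} = s^k y_0 e^{st}. \]

Plugging \(u(t)=e^{st}\) and \(y_{ss}(t)=y_0 e^{st}\) into the differential equation gives

\[ \left(s^n + a_1 s^{n-1} + \cdots + a_n\right) y_0 e^{st} = \left(b_0 s^m + b_1 s^{m-1} + \cdots + b_m\right) e^{st}. \]

Cancel \(e^{st}\):

\[ a(s)\, y_0 = b(s), \qquad a(s) = s^n + a_1 s^{n-1} + \cdots + a_n, \quad b(s) = b_0 s^m + b_1 s^{m-1} + \cdots + b_m. \]

Solving for \(y_0\),

\[ y_0 = \frac{b(s)}{a(s)}. \]

Since \(y_{ss}(t) = y_0 e^{st}\) and \(u(t)=e^{st}\), we obtain

\[ G(s) = \frac{y_{ss}(t)}{u(t)} = \frac{b(s)}{a(s)}. \]

The denominator \(a(s)\) is the characteristic polynomial of the ODE (or, in short, of the system).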

Examples: ODE ↔ Transfer Function#

In the following examples, substitute \(y_{ss} = y_0 e^{st}\), \(u = e^{st}\) in the given ODEs and isolate \(y_0\) to obtain an expression for the transfer function from \(u\) to \(y\).

Integrator: \( \dot y = u.\)

Derivative: \( y = \dot u.\)

First-order system: \( \dot y + a y = u.\)

Double integrator: \( \ddot y = u.\)

Second-order system: \( \ddot y + 2\zeta\omega_n \dot y + \omega_n^2 y = u.\)

PID controller: \( y = k_p u + k_d \dot u + k_i \int u\,dt.\)
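For reference, carrying out the substitution for the six examples above gives the following transfer functions, in the same order. Each derivative contributes a factor \(s\), and the integral in the PID controller contributes a factor \(1/s\) (in the steady-state sense, for \(s \neq 0\)).

\[
\begin{aligned}
\text{Integrator:} \quad & s\, y_0 = 1 && \Rightarrow \quad G(s) = \frac{1}{s}, \\
\text{Derivative:} \quad & y_0 = s && \Rightarrow \quad G(s) = s, \\
\text{First-order system:} \quad & (s + a)\, y_0 = 1 && \Rightarrow \quad G(s) = \frac{1}{s + a}, \\
\text{Double integrator:} \quad & s^2 y_0 = 1 && \Rightarrow \quad G(s) = \frac{1}{s^2}, \\
\text{Second-order system:} \quad & (s^2 + 2\zeta\omega_n s + \omega_n^2)\, y_0 = 1 && \Rightarrow \quad G(s) = \frac{1}{s^2 + 2\zeta\omega_n s + \omega_n^2}, \\
\text{PID controller:} \quad & y_0 = k_p + k_d s + \frac{k_i}{s} && \Rightarrow \quad G(s) = k_p + k_d s + \frac{k_i}{s}.
\end{aligned}
\]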

Example: Linearized balance system#

Consider the linearized model of a cart–pendulum (balance) system with the variables

\(p\) = cart position

\(\theta\) = pendulum angle

\(F\) = applied force.

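The linearized differential equations are

\[ M \ddot p - m\ell\, \ddot\theta + c\, \dot p = F, \qquad J \ddot\theta - m\ell\, \ddot p + \gamma\, \dot\theta - m g \ell\, \theta = 0. \]

Here \(M, m, \ell, J, c, \gamma, g\) are physical parameters.

If the input is of the form

\[ F(t) = e^{st}, \]

then we look for steady-state (forced) solutions in which all signals are of the form

\[ p(t) = p_0 e^{st}, \qquad \theta(t) = \theta_0 e^{st}. \]

Substituting into the ODEs gives

\[ M s^2 p_0 - m\ell s^2 \theta_0 + c s\, p_0 = 1, \qquad J s^2 \theta_0 - m\ell s^2 p_0 + \gamma s\, \theta_0 - m g \ell\, \theta_0 = 0. \]

Rearrange:

\[ (M s^2 + c s)\, p_0 - m\ell s^2\, \theta_0 = 1, \qquad -m\ell s^2\, p_0 + (J s^2 + \gamma s - m g \ell)\, \theta_0 = 0. \]

This can be written compactly as

\[ \begin{bmatrix} M s^2 + c s & -m\ell s^2 \\ -m\ell s^2 & J s^2 + \gamma s - m g \ell \end{bmatrix} \begin{bmatrix} p_0 \\ \theta_0 \end{bmatrix} = \begin{bmatrix} 1 \\ 0 \end{bmatrix}. \]

Let

\[ s\,\Delta(s) = (M s^2 + c s)(J s^2 + \gamma s - m g \ell) - m^2 \ell^2 s^4 \]

denote the determinant of this matrix, which always contains a factor \(s\). Expanding gives

\[ \Delta(s) = (M J - m^2 \ell^2)\, s^3 + (J c + M \gamma)\, s^2 + (c \gamma - M m g \ell)\, s - m g \ell c. \]

The transfer function from force \(F\) to angle \(\theta\) is

\[ G_{\theta F}(s) = \theta_0 = \frac{m \ell s}{\Delta(s)}. \]

The transfer function from force \(F\) to position \(p\) is

\[ G_{pF}(s) = p_0 = \frac{J s^2 + \gamma s - m g \ell}{s\, \Delta(s)}. \]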

Transfer function from \(F\) to \(\theta\):

\[ \frac{\ell m s}{J M s^3 + J c s^2 - M g \ell m s + M \gamma s^2 - c g \ell m + c \gamma s - \ell^2 m^2 s^3} \]

Transfer function from \(F\) to \(p\):

\[ \frac{J s^2 - g \ell m + \gamma s}{s \left(J M s^3 + J c s^2 - M g \ell m s + M \gamma s^2 - c g \ell m + c \gamma s - \ell^2 m^2 s^3\right)} \]

Common denominator \(\Delta(s)\) (the expanded form is identical):

\[ \Delta(s) = J M s^3 + J c s^2 - M g \ell m s + M \gamma s^2 - c g \ell m + c \gamma s - \ell^2 m^2 s^3 \]

--- Direct determinant method ---

G_theta:

\[ \frac{0.1 s^2}{-0.004 s^4 + 0.0506 s^3 - 0.976 s^2 - 0.0981 s} \]

G_p:

\[ \frac{0.006 s^2 + 0.05 s - 0.981}{-0.004 s^4 + 0.0506 s^3 - 0.976 s^2 - 0.0981 s} \]

--- State-space method ---

G_theta:

\[ \frac{-2.487 \times 10^{-14} s^2 - 25 s}{s^3 - 12.65 s^2 + 244 s + 24.52} \]

G_p:

\[ \frac{-1.599 \times 10^{-14} s^3 - 1.5 s^2 - 12.5 s + 245.2}{s^4 - 12.65 s^3 + 244 s^2 + 24.52 s} \]

The two methods agree. Dividing the numerator and denominator of the direct results by \(-0.004\) (and, for G_theta, cancelling the common factor \(s\)) gives the state-space results. The coefficients of order \(10^{-14}\) are floating-point round-off and can be read as zero.
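The direct determinant computation can be sketched with plain polynomial arithmetic. The sketch assumes that the steady-state equations take the form of a symmetric \(2 \times 2\) polynomial matrix with diagonal entries \(M s^2 + c s\) and \(J s^2 + \gamma s - m g \ell\), off-diagonal entries \(-m \ell s^2\), and right-hand side \([1, 0]^T\). The parameter values are hypothetical and were chosen only so that the coefficients match the numerical output printed above.

```python
import numpy as np

# Hypothetical parameter values, chosen so that the coefficients match
# the numerical output printed above (only the product m*l matters here).
M, J, c, gamma, g = 1.0, 0.006, 0.1, 0.05, 9.81
ml = 0.1                                  # product m * l

# Polynomials in s, highest power first
a11 = np.array([M, c, 0.0])               # M s^2 + c s
a22 = np.array([J, gamma, -ml * g])       # J s^2 + gamma s - m g l
a12 = np.array([-ml, 0.0, 0.0])           # -m l s^2 (off-diagonal entry)

# Determinant of the 2x2 polynomial matrix
det = np.polysub(np.polymul(a11, a22), np.polymul(a12, a12))

# Cramer's rule with right-hand side [1, 0]^T
num_p = a22                               # p0 = a22 / det
num_theta = -a12                          # theta0 = -a12 / det

print("det:      ", det)
print("num_theta:", num_theta)
print("num_p:    ", num_p)
```

No common factors are cancelled here, which is why the determinant keeps its factor \(s\), as in the direct-method output.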

Poles and zeros of a transfer function#

If a transfer function is written as

\[ G(s) = \frac{b(s)}{a(s)}, \]

then

Zeros of \(G\) are the roots of

\[ b(s) = 0, \]

Poles of \(G\) are the roots of

\[ a(s) = 0. \]
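Numerically, poles and zeros are just polynomial roots. The sketch below uses `numpy.roots` on a hypothetical transfer function \(G(s) = (s+3)/(s^2 + 3s + 2)\), chosen for illustration only.

```python
import numpy as np

# Hypothetical example: G(s) = (s + 3) / (s^2 + 3 s + 2)
b = [1.0, 3.0]          # numerator coefficients b(s), highest power first
a = [1.0, 3.0, 2.0]     # denominator coefficients a(s)

zeros = np.roots(b)             # roots of b(s) = 0
poles = np.sort(np.roots(a))    # roots of a(s) = 0
print("zeros:", zeros)
print("poles:", poles)
```

Here the single zero is at \(s = -3\) and the poles are at \(s = -2\) and \(s = -1\).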

Example

Consider the transfer function

The zeros are the roots of the numerator

The poles are the roots of the denominator

i.e.,

Example

Consider the transfer function

The zeros are the roots of the numerator

The poles are the roots of the denominator

Factor:

so

Notice that the factor \((s+2)\) appears in both the numerator and denominator. This means there is a “pole–zero cancellation” at \(s = -2\).

After cancellation, the transfer function becomes

Poles and Eigenvalues#

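Consider a state-space model

\[ \dot x = A x + B u, \qquad y = C x + D u, \]

with transfer function

\[ G(s) = C (sI - A)^{-1} B + D. \]

Using the identity

\[ (sI - A)^{-1} = \frac{\operatorname{adj}(sI - A)}{\det(sI - A)}, \]

we can write

\[ G(s) = \frac{C \operatorname{adj}(sI - A)\, B + D \det(sI - A)}{\det(sI - A)}. \]

Therefore, the denominator of \(G(s)\) is given by

\[ a(s) = \det(sI - A). \]

By definition, the eigenvalues of \(A\) are the roots of

\[ \det(sI - A) = 0. \]

Hence,

\[ \text{poles of } G(s) = \text{eigenvalues of } A. \]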

In general, this statement holds up to possible cancellations between the numerator and denominator. When no such cancellations occur, the poles of the transfer function exactly match the eigenvalues of \(A\).

Interpretation

The matrix \(A\) determines the internal dynamics of the system.

The eigenvalues of \(A\) determine how the state evolves.

These same values appear as the poles of the transfer function, which determine the input–output behavior.

Thus, poles provide a direct link between state-space dynamics and transfer-function representations.

Example

Consider the state-space system

The eigenvalues of \(A\) are

Compute

We have

Thus

Multiplying by \(C\),

Combine into a single fraction:

Recap:

Eigenvalues of \(A\): \(-1\), \(-2\)

Poles of \(G(s)\): \(-1\), \(-2\)

Example

Consider the state-space system

The matrix \(A\) has the eigenvalues \(-1\) and \(-2\).

The transfer function (after a pole-zero cancellation) is

which has only one pole at \(s=-1\).
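A cancellation of this kind can be reproduced numerically. The system below is a hypothetical one, not the one from the notes: its matrix \(A\) has eigenvalues \(-1\) and \(-2\), but the input does not excite the mode at \(-2\), so that factor appears in both the numerator and the denominator.

```python
import numpy as np
from scipy.signal import ss2tf

# Hypothetical system (not the one from the notes): the mode at -2
# is not excited by the input, so it cancels in the transfer function.
A = np.array([[-1.0, 0.0], [0.0, -2.0]])
B = np.array([[1.0], [0.0]])
C = np.array([[1.0, 1.0]])
D = np.array([[0.0]])

eigenvalues = np.sort(np.linalg.eigvals(A))
num, den = ss2tf(A, B, C, D)

# Drop negligible leading numerator coefficients before taking roots
coeffs = num[0]
first = np.argmax(np.abs(coeffs) > 1e-9)
zeros = np.roots(coeffs[first:])
den_roots = np.sort(np.roots(den))

print("eigenvalues of A:     ", eigenvalues)
print("roots of det(sI - A): ", den_roots)
print("zeros of the numerator:", zeros)
```

The numerator has a zero at \(s = -2\), which coincides with one root of \(\det(sI - A)\). After cancelling it, the transfer function is \(1/(s+1)\), with a single pole at \(s = -1\).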

Steady-state gain for step inputs using the transfer function#

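Consider a linear input–output system written in ODE form:

\[ \frac{d^n y}{dt^n} + a_1 \frac{d^{n-1} y}{dt^{n-1}} + \cdots + a_n y = b_0 \frac{d^m u}{dt^m} + b_1 \frac{d^{m-1} u}{dt^{m-1}} + \cdots + b_m u. \]

The corresponding transfer function is

\[ G(s) = \frac{b_0 s^m + b_1 s^{m-1} + \cdots + b_m}{s^n + a_1 s^{n-1} + \cdots + a_n}. \]

Now suppose the input is a constant (step) input

\[ u(t) = u_0. \]

If the system is stable, then the output converges to a constant steady-state value

\[ \lim_{t \to \infty} y(t) = y_0. \]

At steady state, all derivatives of \(y(t)\) are zero, and all derivatives of \(u(t)\) of order \(1\) or higher are also zero. Substituting into the ODE gives

\[ a_n y_0 = b_m u_0. \]

Therefore,

\[ \frac{y_0}{u_0} = \frac{b_m}{a_n}. \]

This ratio is the steady-state gain from a step input to the output.

Evaluating the transfer function at \(s=0\) gives

\[ G(0) = \frac{b_m}{a_n}. \]

Therefore, for a stable system, the steady-state gain from a constant input to the output is

\[ \frac{y_0}{u_0} = G(0). \]

If the same system is written in state-space form

\[ \dot x = A x + B u, \qquad y = C x + D u, \]

then

\[ G(s) = C (sI - A)^{-1} B + D. \]

Evaluating at \(s=0\) gives

\[ G(0) = -C A^{-1} B + D. \]

Thus, for a stable system, the steady-state gain from a step input to the output is

\[ \frac{y_0}{u_0} = G(0) = D - C A^{-1} B. \]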
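The state-space expression for the steady-state gain, \(G(0) = D - C A^{-1} B\), can be compared with a simulated step response. The system below is hypothetical and chosen for illustration; it is stable, with eigenvalues \(-1\) and \(-2\).

```python
import numpy as np
from scipy.signal import lsim

# Hypothetical stable system
A = np.array([[0.0, 1.0], [-2.0, -3.0]])
B = np.array([[0.0], [1.0]])
C = np.array([[1.0, 0.0]])
D = np.array([[0.5]])

# Steady-state gain G(0) = D - C A^{-1} B, computed without forming A^{-1}
dc_gain = (D - C @ np.linalg.solve(A, B)).item()

# Simulate a unit step long enough for the transient to die out
t = np.linspace(0.0, 20.0, 2001)
_, y, _ = lsim((A, B, C, D), np.ones_like(t), t)
final_value = y[-1]
print(dc_gain, final_value)
```

For this system \(G(s) = 1/(s^2 + 3s + 2) + 0.5\), so \(G(0) = 1\), and the simulated output settles at the same value.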

Block diagram algebra#

Transfer functions combine in simple ways under standard block-diagram interconnections.

We will consider three different basic interconnections.

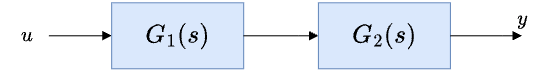

Serial interconnection

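Suppose two systems with transfer functions \(G_1\) and \(G_2\) are connected in series, so that the output \(w\) of the first system is the input of the second:

\[ u \longrightarrow G_1 \longrightarrow w \longrightarrow G_2 \longrightarrow y. \]

If the input is \(u(t)=e^{st}\), then the (steady-state) output of the first block is

\[ w(t) = G_1(s)\, e^{st}, \]

and the output of the second block is

\[ y(t) = G_2(s)\, w(t) = G_2(s)\, G_1(s)\, e^{st}. \]

Therefore, the overall transfer function from \(u\) to \(y\) is

\[ G_{yu}(s) = G_2(s)\, G_1(s). \]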

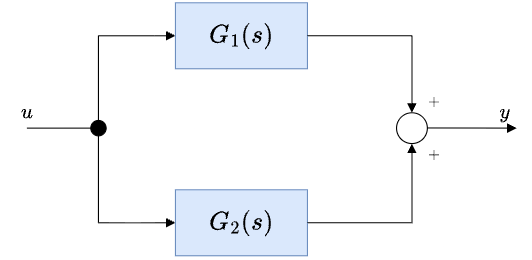

Parallel interconnection

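Suppose the same input \(u\) is applied to two systems \(G_1\) and \(G_2\) in parallel, and the outputs are added:

\[ y = y_1 + y_2, \qquad y_1 = G_1 u, \quad y_2 = G_2 u. \]

If \(u(t)=e^{st}\), then

\[ y_1(t) = G_1(s)\, e^{st}, \qquad y_2(t) = G_2(s)\, e^{st}. \]

Hence,

\[ y(t) = \left(G_1(s) + G_2(s)\right) e^{st}. \]

Therefore, the overall transfer function is

\[ G_{yu}(s) = G_1(s) + G_2(s). \]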

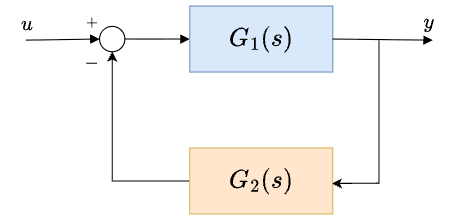

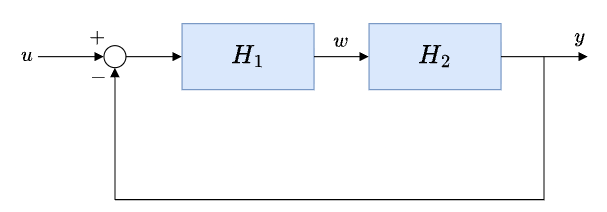

Feedback interconnection

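Consider the (negative) feedback interconnection with \(G_1\) in the forward path and \(G_2\) in the feedback path:

\[ e = u - G_2\, y, \qquad y = G_1\, e. \]

Substituting the error signal into the forward path gives

\[ y = G_1 \left(u - G_2\, y\right). \]

Rearranging,

\[ \left(1 + G_1 G_2\right) y = G_1\, u, \]

so

\[ y = \frac{G_1}{1 + G_1 G_2}\, u. \]

Therefore, the closed-loop transfer function from \(u\) to \(y\) is

\[ G_{yu}(s) = \frac{G_1(s)}{1 + G_1(s)\, G_2(s)}. \]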
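The three interconnection rules (product for series, sum for parallel, and \(G_1/(1+G_1G_2)\) for negative feedback) can be sketched with polynomial arithmetic. Representing a transfer function as a pair of coefficient arrays `(num, den)` and the helper names `series`, `parallel`, and `feedback` are choices made for this sketch, not notation from the notes.

```python
import numpy as np

# A transfer function is represented as (num, den): two coefficient
# arrays in descending powers of s. This representation is an assumption
# made for this sketch.

def series(g1, g2):
    """Series connection: G2 * G1."""
    return np.polymul(g1[0], g2[0]), np.polymul(g1[1], g2[1])

def parallel(g1, g2):
    """Parallel connection: G1 + G2."""
    num = np.polyadd(np.polymul(g1[0], g2[1]), np.polymul(g2[0], g1[1]))
    return num, np.polymul(g1[1], g2[1])

def feedback(g1, g2):
    """Negative feedback: G1 / (1 + G1 G2), with G2 in the return path."""
    num = np.polymul(g1[0], g2[1])
    den = np.polyadd(np.polymul(g1[1], g2[1]), np.polymul(g1[0], g2[0]))
    return num, den

integrator = (np.array([1.0]), np.array([1.0, 0.0]))   # 1/s
unity = (np.array([1.0]), np.array([1.0]))             # 1

num_cl, den_cl = feedback(integrator, unity)           # expected 1/(s + 1)
num_ser, den_ser = series(integrator, integrator)      # expected 1/s^2
num_par, den_par = parallel(integrator, unity)         # expected (s + 1)/s
print(num_cl, den_cl)
```

The helpers do not cancel common factors between numerator and denominator, so results may contain removable pole-zero pairs.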

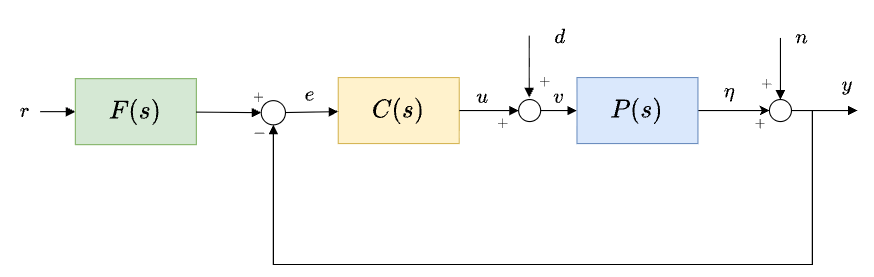

Example (of block diagram algebra)#

We proceed by following the signals in the block diagram step by step.

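From the first summing junction (with reference input \(r\) and measured output \(y\)),

\[ e = r - y. \]

From the diagram:

Controller:

\[ u = C e. \]

Disturbance enters before the plant:

\[ v = u + d. \]

Plant output (before measurement noise):

\[ \eta = P v. \]

Measurement noise:

\[ y = \eta + n. \]

Start from

\[ \eta = P v. \]

Substitute \(v = u + d\):

\[ \eta = P (u + d). \]

Now substitute \(u = C e\):

\[ \eta = P C e + P d. \]

Recall

\[ e = r - y, \qquad y = \eta + n. \]

Substitute \(y\):

\[ e = r - \left(P C e + P d + n\right). \]

Expand:

\[ e = r - P C e - P d - n. \]

Group terms:

\[ (1 + P C)\, e = r - P d - n. \]

Divide through:

\[ e = \frac{1}{1 + P C}\, r - \frac{P}{1 + P C}\, d - \frac{1}{1 + P C}\, n. \]

The error is composed of three contributions:

\[ e = G_{er}\, r + G_{ed}\, d + G_{en}\, n, \]

where

\[ G_{er} = \frac{1}{1 + P C}, \qquad G_{ed} = -\frac{P}{1 + P C}, \qquad G_{en} = -\frac{1}{1 + P C}. \]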

Important observation:

The term \(\frac{1}{1+PC}\) appears in all closed-loop transfer functions.

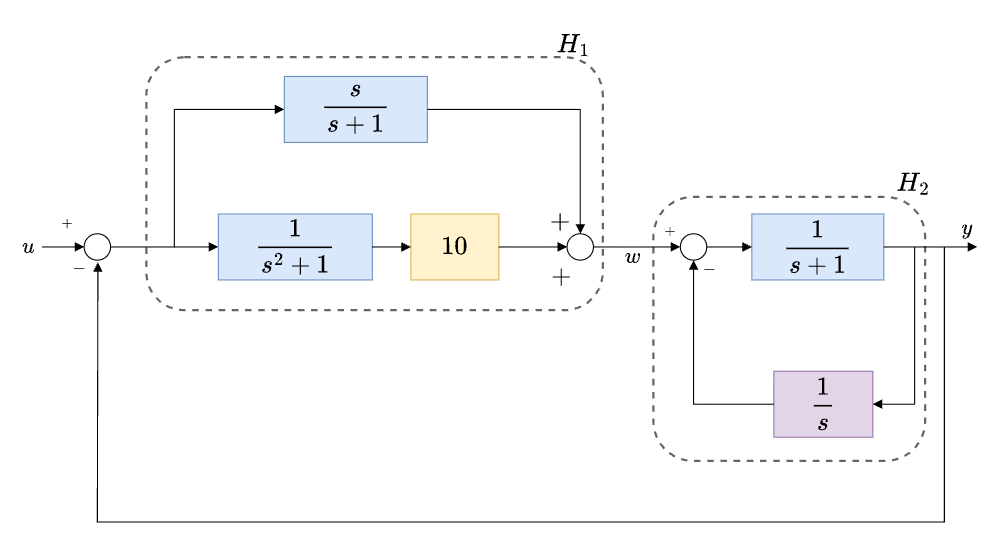

Example#

For the system shown, compute the transfer functions

from \(u\) to \(y\),

from \(u\) to \(w\).

We will use the intermediate subsystems \(H_1\) and \(H_2\) to simplify the derivation.

Compute \(H_1\)

The subsystem \(H_1\) has two parallel branches from its input to the signal \(w\):

upper branch:

lower branch:

Since these two branches are in parallel,

This can be written over a common denominator as

Compute \(H_2\)

The subsystem \(H_2\) is a negative-feedback interconnection with

forward path:

feedback path:

Therefore,

Simplifying,

Outer feedback loop

Once \(H_1\) and \(H_2\) are computed, the overall diagram can be simplified to the following feedback interconnection.

The transfer function from \(u\) to \(y\) is then

Similarly, the transfer function from \(u\) to \(w\) is